Industry Benchmarks 2026: Critical Response Insights

Industry Benchmarks 2026 provide critical visibility into how organizations perform in detection and response. In today’s threat landscape, knowing your internal metrics is not enough. You must compare them against industry standards.

Without reliable benchmarks, detection and response times lack context. A 12-hour detection window may sound acceptable until it is compared with leading organizations that detect threats within one hour.

This guide analyzes Industry Benchmarks 2026 for detection and response performance and explains what these numbers mean for security maturity.

Why Industry Benchmarks 2026 Matter

Industry Benchmarks 2026 help organizations evaluate whether their detection and response metrics are competitive or lagging.

Benchmarks allow teams to:

- Compare SOC performance

- Identify operational bottlenecks

- Reduce dwell time

- Improve containment speed

- Strengthen executive reporting

For a deeper breakdown of how detection and response metrics interact:

🔗 Link: MTTD vs MTTR vs MTTC vs Dwell Time

Average MTTD in 2026

Mean Time to Detect (MTTD) measures how quickly an organization identifies malicious activity after compromise.

2026 MTTD Performance Ranges:

- High-maturity SOC: 30 minutes – 4 hours

- Average enterprise: 6–24 hours

- Low-maturity environments: 1–3 days

Organizations investing in automation and endpoint detection consistently outperform peers.
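As a rough illustration of how an MTTD figure could be computed and placed against the ranges above, the sketch below averages the gap between compromise and detection over a few incidents. The incident timestamps and the function names `mean_time_to_detect` and `mttd_tier` are hypothetical, and because the published ranges leave gaps (for example between 4 and 6 hours), the tiering uses only the upper bound of each range, which is an assumption.

```python
from datetime import datetime, timedelta

# Hypothetical incident records: (compromise time, detection time).
incidents = [
    (datetime(2026, 1, 5, 8, 0), datetime(2026, 1, 5, 10, 30)),    # 2.5 hours
    (datetime(2026, 2, 11, 22, 0), datetime(2026, 2, 12, 13, 0)),  # 15 hours
    (datetime(2026, 3, 3, 14, 0), datetime(2026, 3, 3, 14, 30)),   # 0.5 hours
]

def mean_time_to_detect(records):
    """Average gap between compromise and detection across incidents."""
    gaps = [detected - compromised for compromised, detected in records]
    return sum(gaps, timedelta()) / len(gaps)

def mttd_tier(mttd):
    """Map an MTTD value onto the ranges above, using upper bounds only (assumption)."""
    if mttd <= timedelta(hours=4):
        return "high-maturity SOC"
    if mttd <= timedelta(hours=24):
        return "average enterprise"
    return "low-maturity environment"

mttd = mean_time_to_detect(incidents)
print(mttd, "->", mttd_tier(mttd))  # 6:00:00 -> average enterprise
```

Note that two of the three sample incidents were detected within four hours, yet one slow detection pulls the average into the next tier.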

🔗 Link: Mean Time to Detect (MTTD)

Average MTTC in 2026

Mean Time to Contain (MTTC) reflects how quickly an organization isolates affected systems and prevents further spread after detection.

2026 MTTC Performance Ranges:

- Advanced teams: under 4 hours

- Mid-tier maturity: 8–24 hours

- Lower maturity: 1–3 days

Rapid containment reduces lateral movement and operational impact.
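One way to track containment against the "under 4 hours" figure is to report both the mean and the share of incidents that met the target. The sketch below assumes hypothetical (detected, contained) timestamp pairs; the `TARGET` constant and variable names are illustrative, not taken from any specific tool.

```python
from datetime import datetime, timedelta

# Hypothetical (detected, contained) timestamp pairs.
incidents = [
    (datetime(2026, 1, 5, 10, 0), datetime(2026, 1, 5, 12, 0)),   # 2 hours
    (datetime(2026, 2, 12, 7, 0), datetime(2026, 2, 12, 17, 0)),  # 10 hours
    (datetime(2026, 3, 3, 14, 0), datetime(2026, 3, 3, 17, 0)),   # 3 hours
    (datetime(2026, 4, 20, 9, 0), datetime(2026, 4, 21, 6, 0)),   # 21 hours
]

# Assumed target, taken from the "advanced teams" range above.
TARGET = timedelta(hours=4)

# Time from detection to containment for each incident.
durations = [contained - detected for detected, contained in incidents]

# MTTC is the mean of those durations.
mttc = sum(durations, timedelta()) / len(durations)

# Fraction of incidents contained faster than the target.
within_target = sum(1 for d in durations if d < TARGET) / len(durations)

print(f"MTTC: {mttc}, contained under 4 h: {within_target:.0%}")
# MTTC: 9:00:00, contained under 4 h: 50%
```

Reporting the share alongside the mean shows whether a mid-tier average hides a mix of fast and very slow containments.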

🔗 Link: Mean Time to Contain (MTTC)

Average MTTR in 2026

Mean Time to Respond (MTTR) measures the time needed to complete remediation and restore affected systems.

2026 MTTR Performance Ranges:

- Mature programs: 1–3 days

- Average enterprise: 3–7 days

- Complex ransomware cases: 2+ weeks

Recovery time directly influences business continuity costs.
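Because a single prolonged ransomware recovery can dominate an average, it can help to look at the median alongside the mean. The sketch below uses hypothetical remediation times, measured in days from detection to full restoration (the starting point is an assumption, since teams define it differently).

```python
from statistics import mean, median

# Hypothetical remediation times in days; the last entry stands in for
# a complex ransomware case of the "2+ weeks" kind.
remediation_days = [1.5, 2.0, 2.5, 3.0, 4.0, 17.0]

mean_days = mean(remediation_days)      # pulled upward by the outlier
median_days = median(remediation_days)  # typical incident

print(f"MTTR (mean): {mean_days} days, median: {median_days} days")
# MTTR (mean): 5.0 days, median: 2.75 days
```

In this sample the mean falls in the 3–7 day range while the median sits in the 1–3 day range, so the two numbers together give a clearer picture than MTTR alone.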

🔗 Link: Mean Time to Respond (MTTR)

Average Dwell Time in 2026

Dwell time measures how long attackers remain undetected inside a network.

2026 Dwell Time Averages:

- Global average: 10–16 days

- Top-performing organizations: under 5 days

- High-risk industries: 20+ days

Reducing dwell time is one of the strongest indicators of security maturity.

🔗 Link: Dwell Time in Cybersecurity

External Sources

These 2026 benchmarks align with findings from major cybersecurity reports:

- IBM Cost of a Data Breach Report

- Mandiant M-Trends Report

- Verizon Data Breach Investigations Report (DBIR)

These large-scale studies provide global incident response insights.

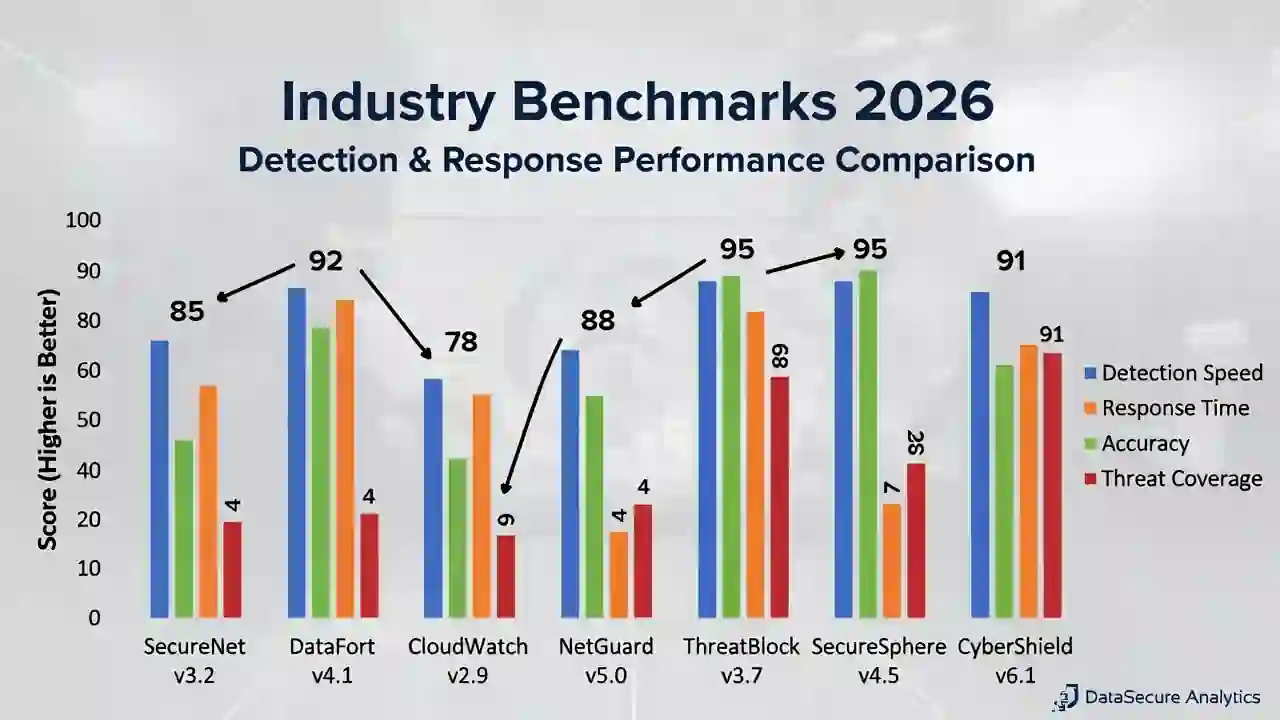

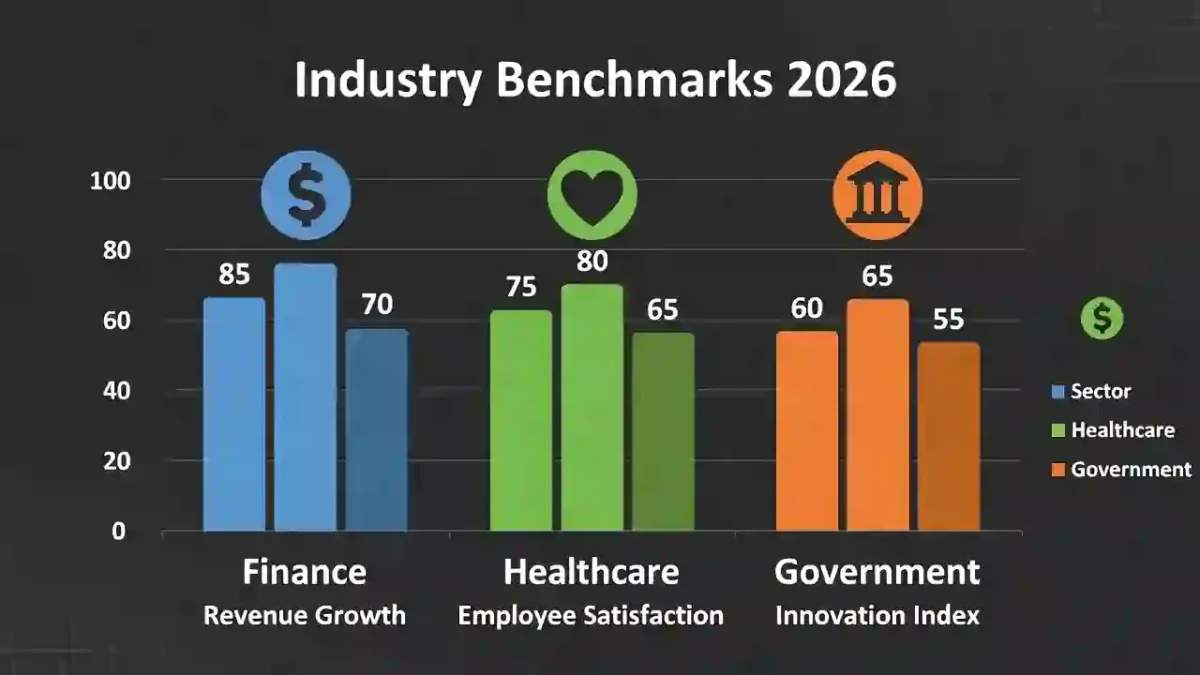

Industry Differences in 2026

Detection and response times vary significantly across sectors.

| Industry | Detection Speed | Response Speed |
|---|---|---|
| Finance | Fast | Fast |
| Healthcare | Moderate | Moderate |
| Government | Improving | Moderate |
| Manufacturing | Slower | Slower |

Regulated sectors often demonstrate faster detection due to compliance pressure and mandatory reporting frameworks.

Factors That Influence Detection Performance

Several operational variables influence Industry Benchmarks 2026 results:

- SOC staffing levels

- Automation and SOAR tools

- EDR/XDR coverage

- Threat intelligence integration

- Incident simulation frequency

- Playbook maturity

Organizations with strong automation consistently reduce alert fatigue and improve response speed.

How to Improve Your 2026 Benchmarks

To align with Industry Benchmarks 2026 leaders:

- Implement continuous monitoring

- Automate alert triage

- Conduct red team exercises

- Improve endpoint visibility

- Strengthen incident response playbooks

For lifecycle alignment:

🔗 Link: Cyber Attack Lifecycle Timeline

Final Thoughts

Industry Benchmarks 2026 provide critical detection insights that help organizations measure security maturity objectively.

Reducing MTTD, MTTC, MTTR, and dwell time strengthens resilience and limits breach impact. Benchmarking is not about chasing perfection; it is about continuous, measurable improvement.

Organizations that track and compare performance consistently build stronger and more adaptive cybersecurity programs.