Data Breach Timeline Template: 9 Critical Response Steps

A serious breach rarely begins with a complete picture. More often, it starts with fragments: an alert that looks unusual, a user complaint, a vendor warning, or activity that only becomes suspicious after someone checks the logs twice. In those first hours, the biggest risk is often not a lack of effort. It is a lack of structure.

That is where a data breach timeline template becomes useful.

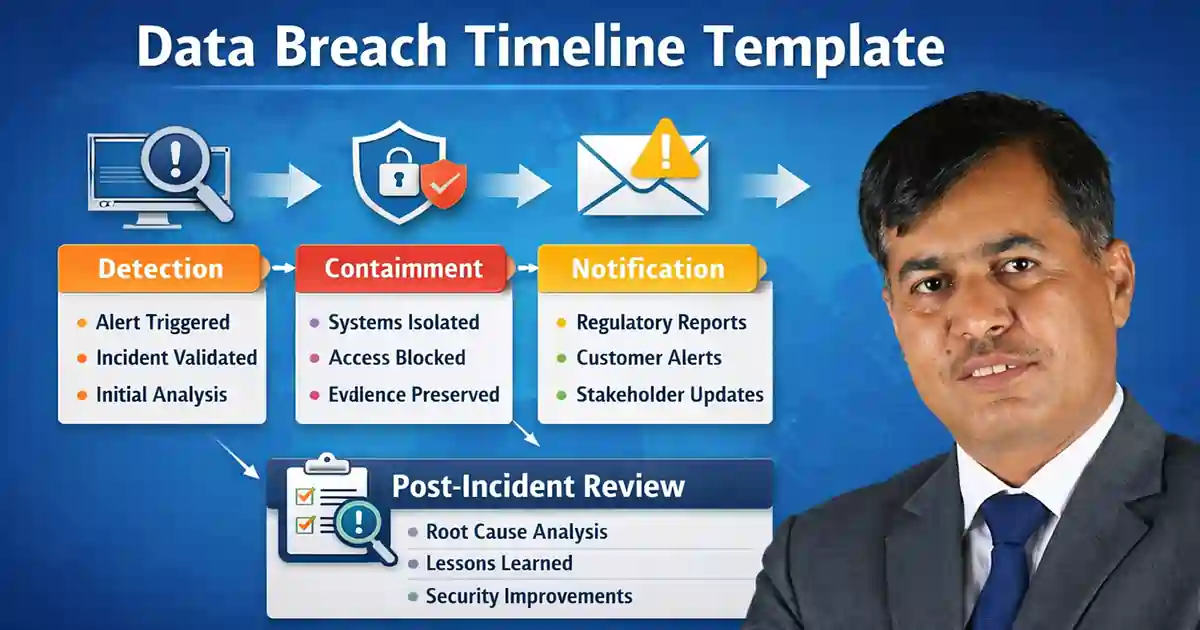

A practical timeline is not just an administrative record. It gives the incident response team a shared chronology of what was discovered, when it was discovered, which actions were taken, who approved them, and what still remains unresolved. That kind of record helps security, legal, leadership, and communications teams work from the same facts instead of piecing events together from memory later. Modern guidance from NIST and CISA still treats incident response as a disciplined lifecycle built around detection, response, recovery, and continuous improvement.

For organizations operating in the US, the UK, or across multiple regions, the overall flow is usually familiar. The incident is detected, contained, investigated, assessed for reporting obligations, recovered, and then reviewed. What changes from one jurisdiction to another is usually the reporting logic, not the need for a clear operational record. The UK ICO continues to stress both risk assessment and timely breach reporting where required, while US reporting obligations may vary by state, sector, or rule set.


Why a Breach Timeline Matters

During a live incident, teams are usually busy. Security is reviewing alerts, infrastructure is isolating systems, legal is trying to establish when the organization became aware of the event, and leadership wants updates before the investigation is even complete. Without a clean record, those parallel workstreams quickly create confusion.

A timeline solves a simple problem: sequence. It shows what happened first, what was later confirmed, and which actions were taken based on the information available at the time. That is useful operationally, but it also matters later for executive review, insurance discussions, customer communication, and compliance analysis.

If your team already measures response performance, SOC Efficiency Metrics 2026 and Reduce Detection Time Regulatory Deadlines Risk cover that ground in more detail. Both make the same point: the faster a team detects and organizes a response, the more room it has to make sound decisions under pressure.

What to Include in a Data Breach Timeline Template

A good data breach timeline template does not need to be elaborate. It needs to be usable. In most cases, the timeline should capture the first alert, the validation point, the affected systems or accounts, the likely data involved, containment actions, legal review milestones, notification decisions, recovery work, and final lessons learned.

Think of it less as a static form and more as a running case history. The strongest templates are updated as the incident evolves rather than reconstructed days later from scattered notes. That is one reason incident-response guidance, including NIST's, emphasizes disciplined documentation over informal memory or ad hoc chat logs.

Detection

Detection begins when something suggests unauthorized access, exposure, misuse, or exfiltration may have occurred. Sometimes the signal is obvious. Often it is not. It may be an outbound transfer anomaly, suspicious administrator behavior, a vendor warning, repeated login failures, or a customer report that does not make sense yet.

At this stage, the priority is not to sound certain. It is to document early facts accurately.

What to record during detection

- exact time the event was first observed

- source of the alert or report

- analyst or team handling the first review

- affected system, application, account, or environment

- early indicators of compromise

- initial severity estimate

- whether personal, financial, or credential data may be involved

What usually matters most early

- confirm whether the issue is a genuine incident

- open an incident record immediately

- assign an owner

- preserve logs and evidence

- notify the right internal stakeholders based on severity

Detection speed shapes everything that follows. SOC Efficiency Metrics 2026 explains why a lower mean time to detect improves both containment and reporting readiness.

Example timeline entry

09:12 UTC – Monitoring alert triggered for unusual outbound activity from internal repository.

09:26 UTC – Security analyst validated suspicious behavior and opened incident case.

09:41 UTC – Incident owner assigned. IT and legal informed.
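To keep the chronology consistent under pressure, some teams log entries through a small script rather than free text. The sketch below is a minimal, hypothetical Python schema (the `TimelineEntry` and `IncidentTimeline` names are illustrative, not taken from any tool): it refuses timestamps without a time zone, stores everything in UTC, only ever appends, and sorts by event time so entries logged late still land in the right place.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class TimelineEntry:
    """One immutable line in the incident chronology (hypothetical schema)."""
    timestamp: datetime  # when the event occurred, normalized to UTC
    phase: str           # e.g. "detection" or "containment"
    actor: str           # analyst, team, or approver responsible
    description: str


@dataclass
class IncidentTimeline:
    incident_id: str
    entries: list[TimelineEntry] = field(default_factory=list)

    def record(self, timestamp: datetime, phase: str, actor: str, description: str) -> TimelineEntry:
        # Refuse naive datetimes so no entry is ambiguous about its time zone.
        if timestamp.tzinfo is None:
            raise ValueError("timestamp must be timezone-aware")
        entry = TimelineEntry(timestamp.astimezone(timezone.utc), phase, actor, description)
        self.entries.append(entry)  # record() only appends; it never edits earlier entries
        return entry

    def chronology(self) -> list[str]:
        # Order by event time, because entries are often logged out of sequence.
        ordered = sorted(self.entries, key=lambda e: e.timestamp)
        return [f"{e.timestamp:%H:%M} UTC – {e.description}" for e in ordered]


# Usage with the detection entries above; the calendar date is arbitrary.
timeline = IncidentTimeline("INC-0001")
timeline.record(datetime(2025, 1, 6, 9, 26, tzinfo=timezone.utc), "detection", "security analyst",
                "Security analyst validated suspicious behavior and opened incident case.")
timeline.record(datetime(2025, 1, 6, 9, 12, tzinfo=timezone.utc), "detection", "monitoring",
                "Monitoring alert triggered for unusual outbound activity from internal repository.")
for line in timeline.chronology():
    print(line)
```

Even though the validation entry was logged first, the printed chronology starts with the 09:12 alert.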

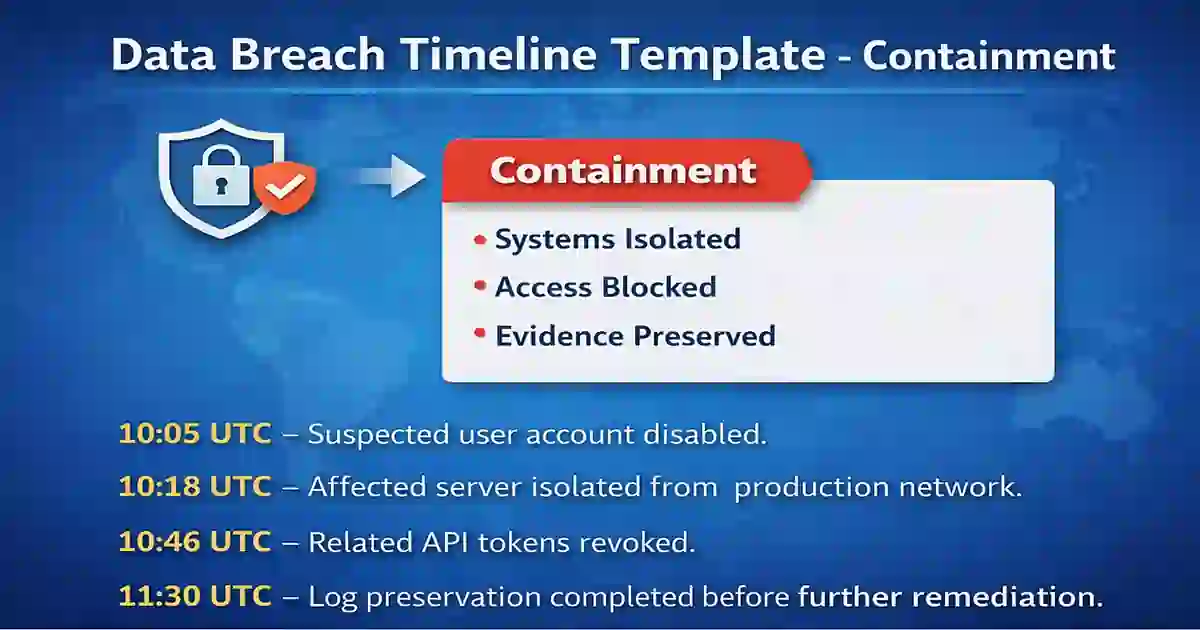

Containment

Once the incident appears credible, the focus shifts to containment. The goal is to stop further harm without destroying the evidence needed to understand what happened.

Containment is often where pressure rises. Teams want to act quickly, but quick action can also erase logs, interrupt production unnecessarily, or make later reconstruction harder. That is why the timeline matters so much here: it preserves the reasoning behind each action.

What to record during containment

- accounts disabled or reset

- systems isolated

- tokens or sessions revoked

- cloud or SaaS access restricted

- vendor escalations made

- evidence preserved before major changes

- approvals for disruptive response actions

Common containment actions

- disable suspected compromised credentials

- isolate affected hosts or services

- block suspicious traffic or remote access

- preserve audit trails and forensic evidence

- review third-party integrations

- document each action with the exact time it occurred

If identity abuse or stolen credentials were part of the initial access path, the Okta Security Checklist and Credential Stuffing vs Password Spraying provide useful background.

Example timeline entry

10:05 UTC – Suspected user account disabled.

10:18 UTC – Affected server isolated from production network.

10:46 UTC – Related API tokens revoked.

11:30 UTC – Log preservation completed before further remediation.
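The rule that evidence should be preserved before major changes can also be enforced in tooling. This minimal Python sketch assumes a hypothetical `ContainmentLog` in which only actions flagged `destructive` (such as reimaging a host) are gated: they are refused unless evidence for that asset was secured at or before the action time and a named approver is recorded. Disabling accounts and isolating hosts are logged without that gate, which matches the example above, where isolation preceded log preservation. The asset names and the 12:15 reimaging step are invented for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ContainmentLog:
    """Hypothetical tracker: evidence-destroying actions need prior preservation and approval."""
    preserved_at: dict[str, datetime] = field(default_factory=dict)  # asset -> time evidence secured
    actions: list[tuple[datetime, str, str, Optional[str]]] = field(default_factory=list)

    def preserve(self, asset: str, at: datetime) -> None:
        self.preserved_at[asset] = at

    def act(self, asset: str, action: str, at: datetime,
            destructive: bool = False, approved_by: Optional[str] = None) -> None:
        if destructive:
            secured = self.preserved_at.get(asset)
            # Block actions like reimaging until evidence for this asset was secured first.
            if secured is None or secured > at:
                raise RuntimeError(f"preserve evidence on {asset} before '{action}'")
            if not approved_by:
                raise ValueError(f"'{action}' on {asset} needs a named approver")
        # Every permitted action is stored with its exact time and approver (if any).
        self.actions.append((at, asset, action, approved_by))


def utc(h: int, m: int) -> datetime:
    # Arbitrary calendar date; only the times mirror the example entries above.
    return datetime(2025, 1, 6, h, m, tzinfo=timezone.utc)


log = ContainmentLog()
log.act("svc-account-7", "account disabled", utc(10, 5))
log.act("repo-server-01", "isolated from production network", utc(10, 18))
log.preserve("repo-server-01", utc(11, 30))
log.act("repo-server-01", "reimaged", utc(12, 15), destructive=True, approved_by="IT director")
```

Keeping the approver in the same record as the action answers the question reviewers most often ask later: who authorized the disruptive step, and when.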

Assessment

After immediate containment, the team needs to understand scope. What data was touched? Was it merely exposed, or is there evidence that it was copied or removed? Which business units were affected? Which jurisdictions may matter?

This is often the phase where early assumptions change. A credible timeline should show that honestly. Good incident records evolve as evidence improves.

What to record during assessment

- confirmed affected systems and accounts

- likely time window of unauthorized access

- categories of data involved

- estimated number of affected records or individuals

- whether exfiltration is confirmed or still suspected

- operational impact

- jurisdictions involved

- relevant third-party dependencies

Containment speed, dwell time, and reporting readiness are no longer just SOC metrics; they also matter to leadership oversight. The Board-Level Cybersecurity Metrics Guide covers that governance angle.

Example timeline entry

Day 1, 15:20 UTC – Preliminary review suggests unauthorized access to internal document repository.

Day 1, 18:40 UTC – Investigation indicates employee and customer contact data may be affected.

Day 2, 09:10 UTC – Scope estimate updated after cloud audit log review.

Day 2, 12:30 UTC – Legal and privacy review initiated.
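Two assessment questions, the likely window of unauthorized access and the dwell time, reduce to simple timestamp arithmetic once indicators are recorded in UTC. In this hypothetical Python sketch, the indicator times are invented for illustration, the detection time reuses the 09:12 UTC alert from the detection example, and `access_window` and `dwell_time` are illustrative helpers rather than part of any product.

```python
from datetime import datetime, timedelta, timezone


def access_window(indicator_times: list[datetime]) -> tuple[datetime, datetime]:
    """Earliest and latest suspicious activity in the evidence reviewed so far."""
    if not indicator_times:
        raise ValueError("no indicators recorded yet")
    return min(indicator_times), max(indicator_times)


def dwell_time(first_activity: datetime, detected_at: datetime) -> timedelta:
    """Time from the first known indicator to the first alert."""
    return detected_at - first_activity


# Hypothetical indicator times pulled from cloud audit logs during scoping.
indicators = [
    datetime(2025, 1, 6, 3, 10, tzinfo=timezone.utc),
    datetime(2025, 1, 5, 21, 30, tzinfo=timezone.utc),
    datetime(2025, 1, 6, 8, 55, tzinfo=timezone.utc),
]
detected = datetime(2025, 1, 6, 9, 12, tzinfo=timezone.utc)

start, end = access_window(indicators)
print(f"Likely access window: {start:%Y-%m-%d %H:%M} to {end:%Y-%m-%d %H:%M} UTC")
print(f"Dwell time: {dwell_time(start, detected)}")
```

The window reflects only the evidence reviewed so far, so treat it as provisional: an earlier indicator found later widens both the window and the dwell time, which is exactly the kind of revision the timeline should record.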

Notification

Notification is where incident response becomes especially sensitive. It sits at the intersection of technical evidence, legal judgment, timing, and public trust.

Not every incident will require regulator or customer notification. But when notification is required, a poor record can create unnecessary exposure. The UK ICO says reportable personal data breaches must be reported to it without undue delay and, where feasible, within 72 hours of becoming aware, while also making clear that not every personal data breach is reportable. The EU GDPR and EDPB guidance follow the same risk-based logic. In the US, notification duties are often shaped by state law, sector requirements, contracts, or the specific data involved.

What to record during notification

- when internal awareness was established

- facts confirmed at the time of review

- categories of data involved

- likely risk to affected individuals

- whether notification thresholds were met

- who approved the decision

- which parties were notified and when

- what follow-up communication was planned

For related reading, see UK Incident Reporting Rules and CIRCIA Rulemaking Tracker 2026. For primary sources, consult NIST incident response guidance, CISA incident response resources, the UK ICO's personal data breach guidance, and EDPB guidance on personal data breach notification.

Example timeline entry

Day 2, 15:40 UTC – Privacy and legal review completed.

Day 2, 17:10 UTC – Leadership approved external notification plan.

Day 3, 08:05 UTC – Required notices submitted.

Day 3, 13:15 UTC – Customer communication finalized and distributed.
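Because the reporting clock runs from awareness, it helps to compute the target time the moment legal records that point. This Python sketch hard-codes only the ICO's 72-hour "where feasible" figure cited above; it does not decide whether a breach is reportable, and any other deadline (US state or sector rules, contracts) would have to be supplied as `window` by whoever confirms it. The awareness time of Day 1, 18:40 UTC and the calendar date standing in for Day 1 are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

# The UK ICO's "within 72 hours of awareness, where feasible" target for reportable breaches.
ICO_REPORTING_WINDOW = timedelta(hours=72)


def reporting_target(aware_at: datetime, window: timedelta = ICO_REPORTING_WINDOW) -> datetime:
    """Target time for a report, counted from when awareness was established."""
    if aware_at.tzinfo is None:
        raise ValueError("record awareness time with an explicit time zone")
    return aware_at + window


def time_left(aware_at: datetime, now: datetime, window: timedelta = ICO_REPORTING_WINDOW) -> timedelta:
    """Remaining time before the target; a negative value means the target has passed."""
    return reporting_target(aware_at, window) - now


# Hypothetical: awareness recorded at Day 1, 18:40 UTC (2025-01-06 stands in for Day 1).
aware = datetime(2025, 1, 6, 18, 40, tzinfo=timezone.utc)
submitted = datetime(2025, 1, 8, 8, 5, tzinfo=timezone.utc)  # Day 3, 08:05 UTC

print("Target:", reporting_target(aware).isoformat())
print("Margin at submission:", time_left(aware, submitted))
```

With those assumed times, the target is 2025-01-09 18:40 UTC and the notice goes out 1 day, 10 hours and 35 minutes before it. Recording the awareness time explicitly is what makes this calculation defensible later.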

Recovery

Recovery begins when the organization moves beyond immediate damage control and starts restoring operations in a controlled way.

That means more than turning systems back on. Recovery should include confirming that the access path has been closed, compromised credentials have been reset, vulnerable settings have been corrected, and the environment is under closer monitoring than before.

What to record during recovery

- systems restored

- backups used and validation results

- credentials reset

- patches or configuration changes applied

- additional monitoring enabled

- vendor remediation completed

- business approval for return to service

- remaining risks under review

Recovery should lead into remediation and stronger controls, not just system restoration. Patch Management SLA Template, Secure by Design Checklist Vendors, and Public-Facing Application Security Checklist cover those next steps in more detail.

Example timeline entry

Day 4, 11:00 UTC – Clean restoration of affected repository completed.

Day 4, 16:45 UTC – MFA enforced for privileged users in impacted environment.

Day 5, 10:30 UTC – Enhanced outbound monitoring enabled.

Day 5, 14:20 UTC – Operations leadership approved controlled return to service.
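A return-to-service decision is easier to defend when sign-off is blocked until the recovery checklist is complete. This minimal Python sketch uses hypothetical item names mapped to the recovery list above. `None` marks an item as not applicable (for example, no vendor was involved), so it does not block approval, while `False` or a missing entry does.

```python
# Hypothetical item names mirroring the recovery checklist above.
RECOVERY_ITEMS = (
    "systems_restored",
    "backups_validated",
    "credentials_reset",
    "fixes_applied",
    "monitoring_enabled",
    "vendor_remediation",
)


def outstanding(record: dict) -> list[str]:
    """Open items: True means done, None means not applicable, False or absent means open."""
    return [item for item in RECOVERY_ITEMS if record.get(item, False) is False]


def approve_return_to_service(record: dict, approver: str) -> str:
    open_items = outstanding(record)
    if open_items:
        # Refuse sign-off while any applicable item is still open.
        raise RuntimeError("cannot approve; open items: " + ", ".join(open_items))
    return f"Return to service approved by {approver}"


record = {
    "systems_restored": True,
    "backups_validated": True,
    "credentials_reset": True,
    "fixes_applied": True,        # e.g. MFA enforced for privileged users
    "monitoring_enabled": False,  # enhanced outbound monitoring not yet live
    "vendor_remediation": None,   # no vendor involved in this hypothetical case
}
print(outstanding(record))
record["monitoring_enabled"] = True
print(approve_return_to_service(record, "operations leadership"))
```

The explicit "not applicable" state matters: without it, teams tend to mark irrelevant items as done, which makes the record less trustworthy at review time.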

Post-Incident Review

The response should not end once the environment is stable. A useful response process includes a formal review after the urgent work is done.

This is where the organization asks harder questions. What failed? What worked? Which decisions were delayed by weak visibility or incomplete documentation? Which controls should change before the next incident?

Include these items in the review section

- root cause

- control gaps

- timeline gaps or documentation problems

- notification outcomes

- vendor performance

- policy or process changes

- training needs

- evidence retention decisions

- final closure date

A timeline is especially valuable here because it lets the organization review what actually happened instead of relying on reconstructed memory.
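Two review tasks, measuring key intervals and spotting stretches where nothing was recorded, fall straight out of the timestamps. This hypothetical Python sketch reuses the times from the detection and containment examples (on an arbitrary date) and flags any gap longer than 30 minutes; that threshold is an assumption, and a flagged gap is a prompt to confirm nothing went unrecorded, not proof of an error.

```python
from datetime import datetime, timedelta, timezone


def find_gaps(times: list[datetime], max_gap: timedelta) -> list[tuple[datetime, datetime]]:
    """Pairs of consecutive entries separated by more than max_gap."""
    ordered = sorted(times)
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > max_gap]


def utc(h: int, m: int) -> datetime:
    # Arbitrary date; times match the detection and containment examples above.
    return datetime(2025, 1, 6, h, m, tzinfo=timezone.utc)


entries = [utc(9, 12), utc(9, 26), utc(9, 41), utc(10, 5), utc(10, 18), utc(10, 46), utc(11, 30)]

time_to_validate = entries[1] - entries[0]           # alert -> validated incident
time_to_first_containment = entries[3] - entries[0]  # alert -> first account disabled

print("Time to validate:", time_to_validate)
print("Time to first containment:", time_to_first_containment)
for start, end in find_gaps(entries, timedelta(minutes=30)):
    print(f"Gap to explain: {start:%H:%M} to {end:%H:%M} UTC")
```

Here the only flagged gap is 10:46 to 11:30 UTC, the stretch before log preservation completed, which a reviewer might confirm was spent on collection rather than undocumented changes.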

Copy-and-Use Data Breach Timeline Template

Below is a practical format you can paste into a document, playbook, or tracking sheet.

Incident Details

- Incident ID:

- Date opened:

- Incident owner:

- Business unit:

- Detection source:

- Initial severity:

Detection

- Time suspicious activity first observed:

- Time incident validated:

- Systems or accounts involved:

- Type of data potentially affected:

- Evidence preserved:

Containment

- Accounts disabled:

- Systems isolated:

- Tokens or sessions revoked:

- Logs or forensic evidence collected:

- Vendors engaged:

- Temporary controls applied:

Assessment

- Confirmed scope:

- Estimated affected users or records:

- Jurisdictions involved:

- Business impact:

- Legal or privacy review started:

- Current confidence level in findings:

Notification

- Notification required: Yes / No

- Regulator notification required: Yes / No

- Customer notification required: Yes / No

- Insurer notified: Yes / No

- Law enforcement involved: Yes / No

- Approval owner:

- Notices sent at:

Recovery

- Systems restored:

- Credentials reset:

- Security fixes applied:

- Monitoring improvements added:

- Recovery approved by:

- Remaining issues under review:

Post-Incident Review

- Root cause:

- Control weaknesses identified:

- Lessons learned:

- Follow-up actions:

- Process changes:

- Closure date:
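If the template lives in a tracking sheet rather than a document, a small script can keep the field list consistent across incidents. This hypothetical Python sketch encodes the sections and fields above, exports them as Section/Field/Value rows with the standard-library `csv` module, and lists fields still blank. Keying values by `(section, field)` is an assumption made for illustration.

```python
import csv
import io

# Section and field names copied from the template above.
TEMPLATE = {
    "Incident Details": ["Incident ID", "Date opened", "Incident owner", "Business unit",
                         "Detection source", "Initial severity"],
    "Detection": ["Time suspicious activity first observed", "Time incident validated",
                  "Systems or accounts involved", "Type of data potentially affected",
                  "Evidence preserved"],
    "Containment": ["Accounts disabled", "Systems isolated", "Tokens or sessions revoked",
                    "Logs or forensic evidence collected", "Vendors engaged",
                    "Temporary controls applied"],
    "Assessment": ["Confirmed scope", "Estimated affected users or records",
                   "Jurisdictions involved", "Business impact",
                   "Legal or privacy review started", "Current confidence level in findings"],
    "Notification": ["Notification required", "Regulator notification required",
                     "Customer notification required", "Insurer notified",
                     "Law enforcement involved", "Approval owner", "Notices sent at"],
    "Recovery": ["Systems restored", "Credentials reset", "Security fixes applied",
                 "Monitoring improvements added", "Recovery approved by",
                 "Remaining issues under review"],
    "Post-Incident Review": ["Root cause", "Control weaknesses identified", "Lessons learned",
                             "Follow-up actions", "Process changes", "Closure date"],
}


def to_csv(values: dict) -> str:
    """One row per template field; unfilled fields are exported as empty cells."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["Section", "Field", "Value"])
    for section, fields in TEMPLATE.items():
        for name in fields:
            writer.writerow([section, name, values.get((section, name), "")])
    return buffer.getvalue()


def unfilled(values: dict) -> list[tuple[str, str]]:
    """Fields still blank, useful as a completeness check before closure."""
    return [(s, f) for s, fields in TEMPLATE.items() for f in fields if not values.get((s, f))]


values = {("Incident Details", "Incident ID"): "INC-0001",
          ("Notification", "Insurer notified"): "Yes"}
print(to_csv(values).splitlines()[1])
print(len(unfilled(values)), "fields still blank")
```

Running the blank-field check before setting the closure date is a cheap way to catch a review that was signed off with gaps.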

Final Notes

A data breach timeline template is not just an internal form. Used properly, it becomes the backbone of the incident record.

The best version is simple enough to maintain under pressure, but detailed enough to support investigation, communication, legal review, and recovery. It should help the organization understand not only what happened, but how the response unfolded and where the next improvements need to be made.

When the situation is moving fast, clarity becomes a control in its own right. That is what a good timeline provides.