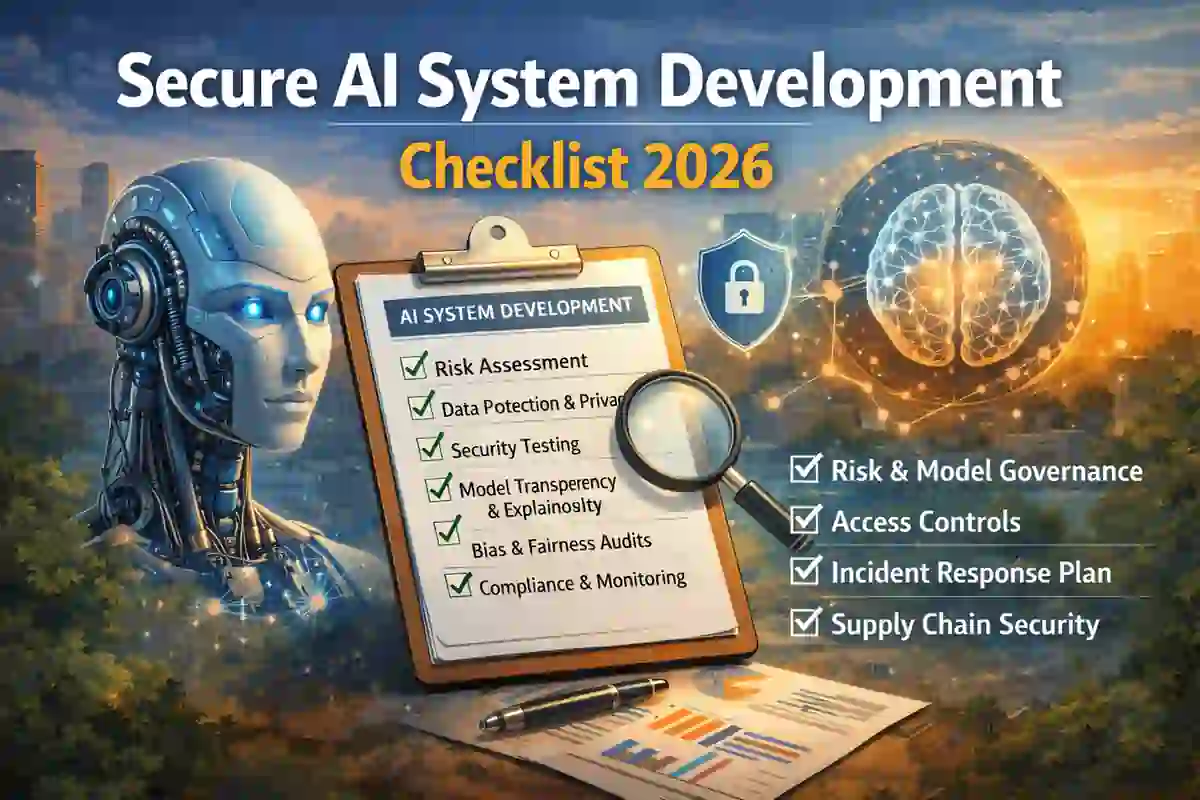

Secure AI System Development Checklist 2026: 15 Proven Steps

Secure AI system development checklist planning should begin before any model is trained, connected, or released to users. In 2026, organizations building AI copilots, internal assistants, retrieval systems, and agentic workflows need to manage not only classic application security problems, but also AI-specific threats such as prompt injection, insecure output handling, training-data poisoning, over-permissioned tools, and weak governance. NIST says its AI Risk Management Framework is designed to help organizations incorporate trustworthiness into the design, development, use, and evaluation of AI systems, and NIST’s Generative AI Profile adds actions tailored to generative AI risks.

Table of Contents

Why a secure AI system development checklist matters in 2026

A strong secure AI system development checklist helps teams move from vague “AI safety” ideas to practical engineering controls.

That matters because AI systems increasingly influence support operations, fraud analysis, incident response, code generation, workflow automation, and decision support. The EU AI Act is presented by the European Commission as the first comprehensive legal framework on AI and is intended to foster trustworthy AI in Europe, which means secure development increasingly overlaps with compliance for organizations serving EU users or markets.

For publishers and security teams targeting the USA, UK, and Europe, a secure AI system development checklist is now part of responsible SDLC practice. It helps organizations control data access, define approval boundaries, monitor model behavior, and reduce legal and operational risk before deployment.

Secure AI System Development Checklist: 15 Proven Steps

1. Define the AI system’s purpose, risk level, and boundaries

Start your secure AI system development checklist by documenting what the AI is allowed to do, what it must never do, who can use it, which systems it can access, and which decisions require human review.

This prevents dangerous scope creep. It also makes later controls easier to design because the team knows whether the AI is informational, assistive, autonomous, or operationally sensitive.

2. Classify data before training or connecting it

Before a model is trained, fine-tuned, or connected to retrieval sources, classify the data involved.

Document whether the system can access source code, internal documents, credentials, customer records, regulated personal data, or confidential business information. Secure AI development gets much harder when sensitive data is connected first and governed later.

3. Apply least privilege to model access and tools

If the AI can call APIs, browse internal systems, read documents, or trigger actions, apply strict least privilege.

Give the model and its surrounding services only the permissions they need. OWASP’s guidance on agentic AI application security focuses on practical controls for the design, development, deployment, and operation of agentic AI systems, which is especially important when AI can take actions instead of only generating text.

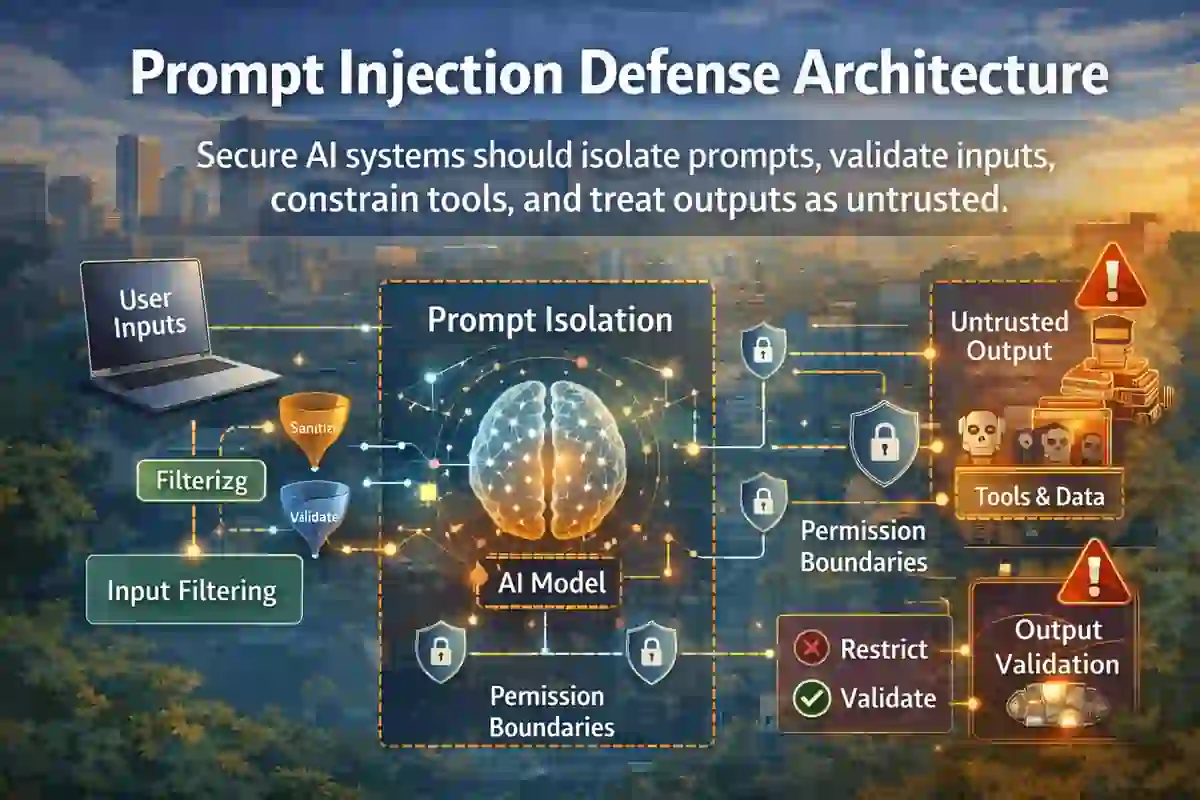

4. Threat model prompt injection early

Every secure AI system development checklist should include prompt injection analysis before launch.

OWASP’s LLM Top 10 project defines prompt injection vulnerabilities as cases where prompts alter LLM behavior or output in unintended ways. These attacks can come from direct user input or from hidden instructions inside external content that the model processes.

Threat modeling should cover:

- direct user prompts

- retrieved documents

- tool results

- system prompts

- memory layers

- plug-ins and external content

5. Validate and constrain model outputs

AI outputs should never be trusted by default.

Treat responses as untrusted content unless validated. Sanitize outputs before they reach code execution, markdown rendering, automation workflows, customer emails, or downstream APIs. This reduces the risk of unsafe output handling and secondary injection chains described in OWASP’s LLM application guidance.

6. Separate prompts, policies, and secrets

Do not mix system prompts, operational rules, credentials, and business logic in one exposed layer.

Store secrets outside prompts, isolate control instructions, and avoid leaking hidden policies through logs or debugging views. A serious secure AI system development checklist assumes determined users will try to extract instructions, override policies, or infer internal logic.

This is also the right place to connect AI engineering to security operations. For example, your internal guide on AI and Incident Response Automation explains how AI can speed triage and response, but only when governance and control boundaries are properly designed.

7. Protect training, tuning, and retrieval data from poisoning

A complete secure AI system development checklist should address data poisoning and retrieval-layer abuse.

Review where training, fine-tuning, evaluation, and retrieval data comes from. Confirm who can edit it, how changes are logged, how data quality is reviewed, and how suspicious records are flagged. Even if you do not train a foundation model yourself, a compromised retrieval layer can still poison outputs.

8. Secure the AI software supply chain

Review model vendors, APIs, SDKs, embeddings pipelines, orchestration frameworks, plug-ins, vector stores, and deployment dependencies.

If your AI stack depends on third-party providers, document supplier risk, update paths, outage impact, audit visibility, and security commitments. This step lines up well with your site’s broader focus on detection and resilience. A useful internal link here is How to Reduce Cybersecurity Detection Time, because visibility into supplier and platform behavior directly affects how quickly security teams detect compromise.

9. Add human approval for high-impact actions

Do not allow AI to take irreversible or high-risk actions without review.

Payments, access approvals, production changes, account deletions, legal responses, and customer notifications should require human approval gates. A practical secure AI system development checklist should make clear which actions are assistive and which require authorization.

10. Log prompts, tool calls, model choices, and decisions

Strong logging is essential for investigations, abuse detection, governance, and debugging.

Capture prompts, retrieved sources, model versions, tool invocations, policy hits, user approvals, and configuration changes. This makes AI incidents easier to reconstruct and reduces investigation delays. A strong internal link here is Mean Time to Respond: 5 Proven Ways to Reduce Risk, because logging quality directly affects response speed and containment efficiency.

11. Monitor for drift, misuse, and abnormal behavior

Your secure AI system development checklist should not stop at deployment.

Track unsafe outputs, abnormal tool usage, repeated prompt injection attempts, suspicious retrieval patterns, hallucination spikes, and access anomalies. Post-launch monitoring matters because AI risk changes as prompts, users, tools, and dependencies evolve.

You can also reinforce this topic cluster with AI Reducing Breach Detection Time 2026, which fits naturally with AI monitoring and faster detection workflows.

12. Build AI-specific incident response playbooks

When an AI system fails, the incident may involve unsafe outputs, hidden prompt injection, data leakage, tool misuse, or flawed automation.

Prepare playbooks for model rollback, traffic throttling, retrieval shutdown, connector disablement, secret rotation, and customer communication. This is a strong place to link to Cybersecurity Incident Response Timeline and Ransomware Detection Timeline, because even in AI-enabled incidents, detection and response speed still determine operational impact.

13. Document evaluation and red-team testing

Before launch, test the system against realistic misuse.

That includes prompt injection, hidden instruction attacks, jailbreak attempts, policy evasion, malicious file ingestion, sensitive data extraction, over-permissioned tool usage, and unsafe automation. Testing should be repeated whenever prompts, models, tools, or retrieval sources change.

14. Map regulatory and governance requirements

A mature secure AI system development checklist includes legal mapping, not only technical controls.

For Europe, the AI Act creates a risk-based legal framework for AI systems. For US public companies, AI-related incidents may still create disclosure and governance pressure under broader cybersecurity expectations. On your site, SEC Cyber Rule Timeline 2026 is the best internal link here because it connects technical failure to executive reporting and materiality review.

For broader US incident reporting context, you can also reference CIRCIA 72-Hour Reporting Rule where relevant to critical infrastructure workflows.

15. Reassess continuously after every major change

The best secure AI system development checklist is continuous.

Reassess after model swaps, prompt changes, new tools, new APIs, fine-tuning updates, policy changes, new user groups, or regional rollout changes. Controls that were sufficient last quarter may already be outdated.

US, UK, and Europe compliance considerations

For the United States, secure AI development should align with broader governance, supplier oversight, detection readiness, and escalation processes. If an AI-related event creates operational disruption, sensitive data exposure, or a material cybersecurity incident, governance and reporting timelines may become relevant quickly. Your internal guide on Incident Response Deadlines US UK is a strong supporting link here.

For the United Kingdom, organizations should pay close attention to privacy, processor relationships, logging, and incident handling whenever AI systems process personal or confidential information. The same controls that reduce prompt injection and data leakage also improve defensibility under governance review.

For Europe, the AI Act is central because it establishes a formal risk-based framework for AI across the EU and is specifically intended to foster trustworthy AI. Organizations serving Europe should treat secure AI engineering as part of compliance readiness, not just technical hygiene.

Final thoughts

A strong secure AI system development checklist for 2026 helps organizations build AI systems that are useful, resilient, monitorable, and easier to govern.

If you define system boundaries, control data access, defend against prompt injection, secure the AI supply chain, monitor abnormal behavior, and prepare AI-specific response playbooks, you reduce both technical and regulatory risk. That makes this secure AI system development checklist a strong pillar post for CybersecurityTime.com and a good search target for readers across the USA, UK, and Europe.